Self-Attention Weight Matrix

A Self-Attention Weight Matrix is a Neural Network Weight Matrix that contains self-attention function values.

- AKA: Self-Attention Matrix.

- Example(s):

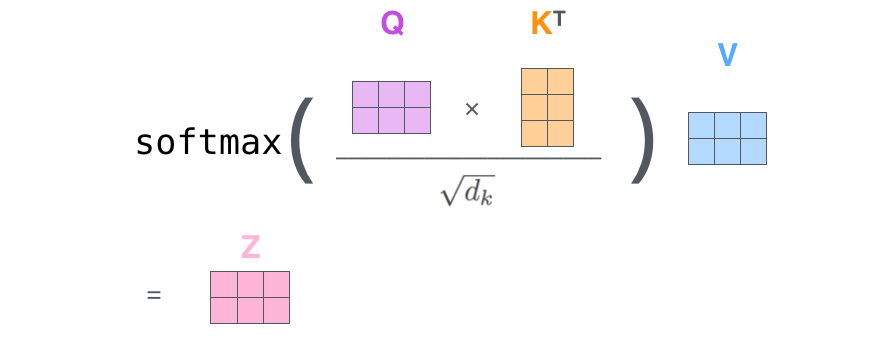

- $SelfAttention\;Matrix(Q,K,V)=softmax\left(\dfrac{QK^T}{\sqrt{d_k}}\right)V$ (e.g. Vaswani et al., 2017).

- $SelfAttention\;Matrix(Q,K,V)=\dfrac{x_{i} W^{Q}\left(x_{j} W^{K}\right)^{T}+x_{i} W^{Q}\left(a_{i j}^{K}\right)^{T}}{\sqrt{d_{z}}}$ (Shaw et al., 2018).

- …

- Counter-Example(s):

- See: Self-Attention Mechanism, Transformer Network, Seq2Seq Encoder-Decoder Neural Network, Feed-Forward Neural Network, Word Embedding, Context-based Attention Mechanism, Encoder-Decoder Attention Mechanism, Neural Machine Translation System, Statistical Machine Translation System.

References

2020a

- (GeeksforGeeks) ⇒ https://www.geeksforgeeks.org/self-attention-in-nlp/ Last Updated : 05 Sep, 2020.

- QUOTE: Self-attention was proposed by researchers at Google Research and Google Brain. It was proposed due to challenges faced by encoder-decoder in dealing with long sequences. (...)

The attention mechanism allows output to focus attention on input while producing output while the self-attention model allows inputs to interact with each other (i.e. calculate attention of all other inputs wrt one input).

- The first step is multiplying each of the encoder input vectors with three weights matrices $(W^{Q}, W^{K}, W^{V})$ that we trained during the training process. This matrix multiplication will give us three vectors for each of the input vector: the key vector, the query vector, and the value vector.

- The second step in calculating self-attention is to multiply the Query vector of the current input with the key vectors from other inputs.

- In the third step, we will divide the score by square root of dimensions of the key vector ($d_k$)(...)

- In the fourth step, we will apply the softmax function on all self-attention scores we calculated wrt the query word (here first word).

- In the fifth step, we multiply the value vector on the vector we calculated in the previous step.

- In the final step, we sum up the weighted value vectors that we got in the previous step, this will give us the self-attention output for the given word.

- The above procedure is applied to all the input sequences. Mathematically, the self-attention matrix for input matrices $(Q, K, V)$ is calculated as: $Attention\left(Q,K,V\right)=softmax\left(\dfrac{QK^T}{\sqrt{d_k}}\right)V$, where $Q$, $K$, $V$ are the concatenation of query, key, and value vectors.
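The steps quoted above can be sketched in NumPy as follows. This is a minimal single-head illustration, not the implementation of any particular library: the function names `softmax` and `self_attention`, the random inputs, and the sizes `n`, `d_model`, and `d_k` are all assumptions chosen for the example.

```python
import numpy as np

def softmax(scores, axis=-1):
    # Subtract the row maximum before exponentiating for numerical stability.
    shifted = scores - scores.max(axis=axis, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=axis, keepdims=True)

def self_attention(X, W_q, W_k, W_v):
    # Step 1: project every input vector into query, key, and value vectors.
    Q, K, V = X @ W_q, X @ W_k, X @ W_v
    # Step 2: dot each query with every key.
    scores = Q @ K.T
    # Step 3: divide by the square root of the key dimension d_k.
    scores = scores / np.sqrt(K.shape[-1])
    # Step 4: softmax over the keys gives one row of attention weights per query.
    weights = softmax(scores, axis=-1)
    # Steps 5 and 6: weight the value vectors and sum them for each query.
    return weights @ V, weights

# Hypothetical sizes and random data, for illustration only.
rng = np.random.default_rng(0)
n, d_model, d_k = 4, 8, 3
X = rng.normal(size=(n, d_model))
W_q, W_k, W_v = (rng.normal(size=(d_model, d_k)) for _ in range(3))
output, weights = self_attention(X, W_q, W_k, W_v)
```

Here `weights` is the $n \times n$ matrix $softmax\left(QK^T/\sqrt{d_k}\right)$, each of whose rows sums to one, and `output` is that matrix multiplied by $V$.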

2020b

- (Wikipedia, 2020) ⇒ https://en.wikipedia.org/wiki/Transformer_(machine_learning_model)#Encoder Retrieved:2020-9-15.

- Each encoder consists of two major components: a self-attention mechanism and a feed-forward neural network. The self-attention mechanism takes in a set of input encodings from the previous encoder and weighs their relevance to each other to generate a set of output encodings. The feed-forward neural network then further processes each output encoding individually. These output encodings are finally passed to the next encoder as its input, as well as the decoders.

The first encoder takes positional information and embeddings of the input sequence as its input, rather than encodings. The positional information is necessary for the Transformer to make use of the order of the sequence, because no other part of the Transformer makes use of this.
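The claim that positional information has to be supplied explicitly can be checked numerically: without it, self-attention treats its input as an unordered set, so reordering the input rows only reorders the output rows. The sketch below assumes a single attention head with no positional information added; `self_attention`, the permutation `perm`, and all sizes are hypothetical choices for the example.

```python
import numpy as np

def self_attention(X, W_q, W_k, W_v):
    # Single-head scaled dot-product self-attention with no positional input.
    Q, K, V = X @ W_q, X @ W_k, X @ W_v
    scores = Q @ K.T / np.sqrt(K.shape[-1])
    scores = scores - scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ V

# Hypothetical sizes and random data, for illustration only.
rng = np.random.default_rng(0)
X = rng.normal(size=(5, 8))
W_q, W_k, W_v = (rng.normal(size=(8, 4)) for _ in range(3))

perm = np.array([3, 0, 4, 1, 2])  # an arbitrary reordering of the 5 positions
out_original = self_attention(X, W_q, W_k, W_v)
out_permuted = self_attention(X[perm], W_q, W_k, W_v)

# Shuffling the inputs only shuffles the outputs in the same way.
order_blind = np.allclose(out_permuted, out_original[perm])
```

Because `order_blind` holds for any permutation, the layer by itself cannot distinguish one word order from another.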

2020c

- (Wikipedia, 2020) ⇒ https://en.wikipedia.org/wiki/Transformer_(machine_learning_model)#Decoder Retrieved:2020-9-15.

- Each decoder consists of three major components: a self-attention mechanism, an attention mechanism over the encodings, and a feed-forward neural network. The decoder functions in a similar fashion to the encoder, but an additional attention mechanism is inserted which instead draws relevant information from the encodings generated by the encoders.

Like the first encoder, the first decoder takes positional information and embeddings of the output sequence as its input, rather than encodings. Since the transformer should not use the current or future output to predict an output though, the output sequence must be partially masked to prevent this reverse information flow. The last decoder is followed by a final linear transformation and softmax layer, to produce the output probabilities over the vocabulary.
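The partial masking described above is commonly implemented by setting the scores of future positions to negative infinity before the softmax. The following sketch assumes that the decoder input has already been shifted right by one position, so that position $i$ may attend to positions $j \le i$; the function name and the sizes are hypothetical.

```python
import numpy as np

def causal_self_attention_weights(Q, K):
    n, d_k = Q.shape
    scores = Q @ K.T / np.sqrt(d_k)
    # True strictly above the diagonal marks future positions (j > i).
    future = np.triu(np.ones((n, n), dtype=bool), k=1)
    # A score of -inf becomes a weight of exactly 0 after the softmax.
    scores = np.where(future, -np.inf, scores)
    shifted = scores - scores.max(axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=-1, keepdims=True)

# Hypothetical sizes and random data, for illustration only.
rng = np.random.default_rng(0)
Q = rng.normal(size=(4, 3))
K = rng.normal(size=(4, 3))
weights = causal_self_attention_weights(Q, K)
```

The resulting weight matrix is lower triangular: the first position attends only to itself, and no position receives information from a later one.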

2020d

- (Celikyilmaz et al., 2020) ⇒ Asli Celikyilmaz, Elizabeth Clark, and Jianfeng Gao. (2020). “Evaluation of Text Generation: A Survey.” In: arXiv preprint arXiv:2006.14799.

2019

- (Zhang, Goodfellow et al., 2019) ⇒ Han Zhang, Ian Goodfellow, Dimitris Metaxas, and Augustus Odena. (2019). “Self-attention Generative Adversarial Networks.” In: International Conference on Machine Learning, pp. 7354-7363. PMLR.

2018a

- (Alammar, 2018) ⇒ Jay Alammar. (2018). “The Illustrated Transformer.” In: Alammar Blog, June 27, 2018.

- QUOTE: Finally, since we’re dealing with matrices, we can condense steps two through six in one formula to calculate the outputs of the self-attention layer.

The self-attention calculation in matrix form

2018b

- (Shaw et al., 2018) ⇒ Peter Shaw, Jakob Uszkoreit, and Ashish Vaswani. (2018). “Self-Attention with Relative Position Representations.” In: Proceedings of the 2018 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (NAACL-HLT 2018) Volume 2 (Short Papers).

- QUOTE: We also want to avoid broadcasting relative position representations. However, both issues can be resolved by splitting the computation of eq.(4) into two terms:

| $e_{i j}=\dfrac{x_{i} W^{Q}\left(x_{j} W^{K}\right)^{T}+x_{i} W^{Q}\left(a_{i j}^{K}\right)^{T}}{\sqrt{d_{z}}}$ | (5) |

- The first term is identical to eq.(2), and can be computed as described above. For the second term involving relative position representations, tensor reshaping can be used to compute $n$ parallel multiplications of $bh\times d_z$ and $d_z\times n$ matrices. Each matrix multiplication computes contributions to $e_{ij}$ for all heads and batches, corresponding to a particular sequence position. Further reshaping allows adding the two terms. The same approach can be used to efficiently compute eq.(3).
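The two-term computation of eq.(5) can be sketched for a single head and a single sequence (i.e. $bh = 1$), which reduces the reshaping described above to $n$ parallel products of $1\times d_z$ and $d_z\times n$ matrices. This is an illustration under stated assumptions rather than the authors' code: the function name, the sizes, and the random tensor `a_k` (standing in for the relative position representations $a_{ij}^{K}$) are hypothetical.

```python
import numpy as np

def relative_attention_logits(X, W_q, W_k, a_k):
    Q = X @ W_q  # (n, d_z), row i is x_i W^Q
    K = X @ W_k  # (n, d_z), row j is x_j W^K
    d_z = Q.shape[-1]
    # First term: ordinary query-key dot products, shape (n, n).
    content = Q @ K.T
    # Second term: for each position i, multiply the (1 x d_z) query
    # by the (d_z x n) matrix of relative position vectors a_k[i].T.
    position = np.matmul(Q[:, None, :], a_k.transpose(0, 2, 1))[:, 0, :]
    return (content + position) / np.sqrt(d_z)

# Hypothetical sizes and random data, for illustration only.
rng = np.random.default_rng(0)
n, d_x, d_z = 5, 6, 4
X = rng.normal(size=(n, d_x))
W_q = rng.normal(size=(d_x, d_z))
W_k = rng.normal(size=(d_x, d_z))
a_k = rng.normal(size=(n, n, d_z))  # a_k[i, j] plays the role of a_ij^K
e = relative_attention_logits(X, W_q, W_k, a_k)
```

Each entry `e[i, j]` equals the right-hand side of eq.(5) for the pair $(i, j)$; applying a row-wise softmax to `e` would then give the attention weights.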

2017b

- (Vaswani et al., 2017) ⇒ Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N Gomez, Łukasz Kaiser, and Illia Polosukhin. (2017). “Attention is all You Need.” In: Advances in Neural Information Processing Systems.

- QUOTE: In practice, we compute the attention function on a set of queries simultaneously, packed together into a matrix $Q$. The keys and values are also packed together into matrices $K$ and $V$. We compute the matrix of outputs as:

| $Attention(Q,K,V)=\mathrm{softmax}\left(\dfrac{QK^T}{\sqrt{d_k}}\right)V$ | (1) |