Character-level Neural Network Training Algorithm

A Character-level Neural Network Training Algorithm is a Neural Network Training Algorithm that is a Character-level NLP Algorithm.

- Context:

- It can be implemented by a Character-level Neural Network Training System to solve a Character-level Neural Network Training Task.

- It usually requires training datasets in which the text is represented as sequences of characters (rather than words or subword units).

- Example(s):

- Counter-Example(s):

- a Word-level Neural Network Training Algorithm,

- See: Language Model, Seq2Seq Neural Network, Computer Character, Natural Language Processing Algorithm.
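
A character-level training dataset can be prepared as follows. Each distinct character receives an integer index, and each position in a text is paired with the character that follows it. This is a minimal sketch, not a reference implementation. It assumes a next-character prediction objective, which is one common choice (as in the RNN language model described by Karpathy, 2015). The function names `build_char_vocab` and `make_training_pairs` are hypothetical.

```python
def build_char_vocab(corpus):
    """Map each distinct character in the corpus to an integer index (sorted order)."""
    chars = sorted(set(corpus))
    return {ch: i for i, ch in enumerate(chars)}


def make_training_pairs(text, vocab):
    """Encode text as character indices and pair each character with the next one.

    Returns (inputs, targets), where targets[t] is the index of the character
    that follows inputs[t] in the text (a next-character prediction setup).
    """
    ids = [vocab[ch] for ch in text]
    return ids[:-1], ids[1:]


vocab = build_char_vocab("hello")
inputs, targets = make_training_pairs("hello", vocab)
print(vocab)    # {'e': 0, 'h': 1, 'l': 2, 'o': 3}
print(inputs)   # [1, 0, 2, 2]
print(targets)  # [0, 2, 2, 3]
```

The vocabulary here is only the characters seen in the corpus. A practical system would typically also reserve indices for padding, unknown characters, or sequence boundaries.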

References

2016a

- (Barzdins & Gosko, 2016) ⇒ Guntis Barzdins, and Didzis Gosko. (2016). “RIGA at SemEval-2016 Task 8: Impact of Smatch Extensions and Character-Level Neural Translation on AMR Parsing Accuracy.” In: Proceedings of the 10th International Workshop on Semantic Evaluation (SemEval-2016), pp. 1143-1147.

- QUOTE: Operating seq2seq model on the character-level (Karpathy, 2015; Chung et al., 2016; Luong et al., 2016) rather than standard word-level improved smatch F1 by notable 7%. Follow-up tests (Barzdins et al., 2016) revealed that character-level translation with attention improves results only if the output is a syntactic variation of the input …
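
The quoted passage contrasts word-level and character-level seq2seq operation. In the sketch below, a small inflectional variation of the training text produces unseen word tokens. The same variation produces no unseen character tokens, at the cost of a longer input sequence. The sketch uses naive whitespace splitting as the word tokenizer, which is an assumption; real word-level systems use more elaborate tokenizers. The example sentences are illustrative only and are not taken from the cited work.

```python
def word_tokens(text):
    # Word-level: naive whitespace tokenization (an illustrative assumption).
    return text.split()


def char_tokens(text):
    # Character-level: every character, including spaces, is a token.
    return list(text)


train = "the boy wants to go"
test = "the boys want to go"

word_vocab = set(word_tokens(train))
char_vocab = set(char_tokens(train))

# Word-level: inflected forms not seen in training are out of vocabulary.
word_oov = [w for w in word_tokens(test) if w not in word_vocab]
# Character-level: every character of the new forms was already seen.
char_oov = [c for c in char_tokens(test) if c not in char_vocab]

print(word_oov)                                           # ['boys', 'want']
print(char_oov)                                           # []
print(len(word_tokens(test)), len(char_tokens(test)))     # 5 19
```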

2016b

- (Chung et al., 2016) ⇒ Junyoung Chung, Kyunghyun Cho, and Yoshua Bengio. (2016). “A Character-level Decoder Without Explicit Segmentation for Neural Machine Translation.” In: Proceedings of the 54th Annual Meeting of the Association for Computational Linguistics (ACL-2016).

2016c

- (Hewlett et al., 2016) ⇒ Daniel Hewlett, Alexandre Lacoste, Llion Jones, Illia Polosukhin, Andrew Fandrianto, Jay Han, Matthew Kelcey, and David Berthelot. (2016). “Wikireading: A Novel Large-scale Language Understanding Task over Wikipedia.” arXiv preprint arXiv:1608.03542

- QUOTE: ... Character seq2seq model. Blocks with the same color share parameters. The same example as in Figure 1 is fed character by character. ...

2015

- (Karpathy, 2015) ⇒ Andrej Karpathy. (2015). “The Unreasonable Effectiveness of Recurrent Neural Networks.” In: Blog post 2015-05-21.

- QUOTE:

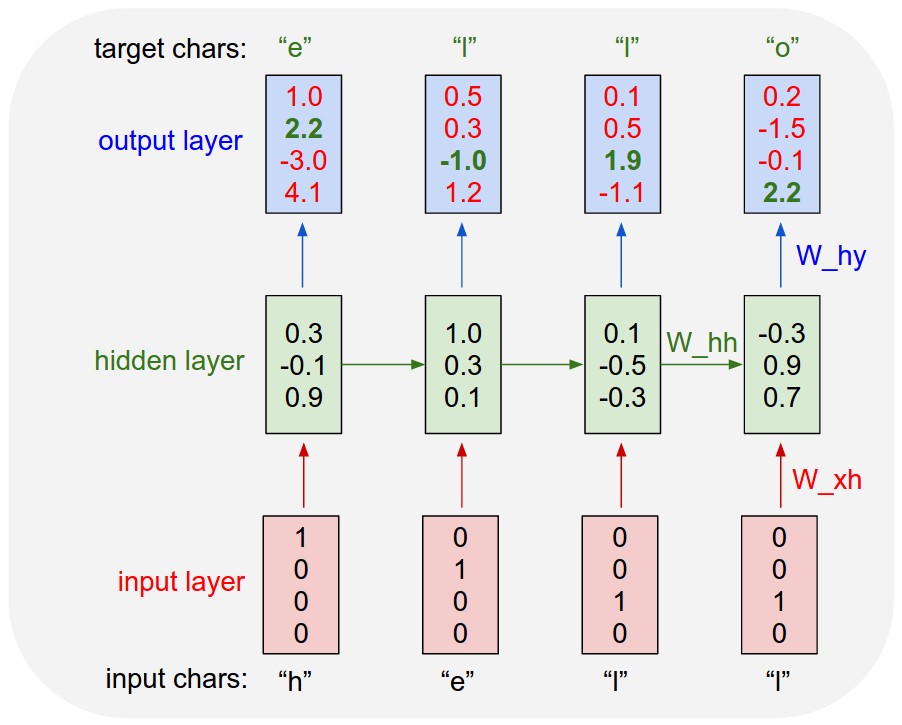

An example RNN with 4-dimensional input and output layers, and a hidden layer of 3 units (neurons). This diagram shows the activations in the forward pass when the RNN is fed the characters "hell" as input. The output layer contains confidences the RNN assigns for the next character (vocabulary is "h,e,l,o"); We want the green numbers to be high and red numbers to be low.
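
The forward pass in the caption above can be sketched in plain Python. The network has a 4-dimensional one-hot input over the vocabulary "h,e,l,o", 3 hidden units, and a 4-dimensional output. It is fed "hell", and at each step the target is the next character, giving the targets "ello". This is a sketch under stated assumptions, not Karpathy's code:

- The weights are random (seed 0) rather than the values shown in the figure.
- Bias terms are omitted.
- The output scores are turned into probabilities with a softmax, and each step is scored with cross-entropy against the target character. Training would adjust the weights, by backpropagation through time (not shown), to raise the target's probability.
- The helper names are hypothetical.

```python
import math
import random

VOCAB = ["h", "e", "l", "o"]
IDX = {ch: i for i, ch in enumerate(VOCAB)}
HIDDEN = 3  # hidden units, as in the figure


def one_hot(ch):
    v = [0.0] * len(VOCAB)
    v[IDX[ch]] = 1.0
    return v


def matvec(m, v):
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def softmax(z):
    mx = max(z)  # subtract the max for numerical stability
    e = [math.exp(x - mx) for x in z]
    s = sum(e)
    return [x / s for x in e]


def rand_matrix(rows, cols, rng):
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


rng = random.Random(0)
W_xh = rand_matrix(HIDDEN, len(VOCAB), rng)  # input -> hidden
W_hh = rand_matrix(HIDDEN, HIDDEN, rng)      # hidden -> hidden (recurrence)
W_hy = rand_matrix(len(VOCAB), HIDDEN, rng)  # hidden -> output


def forward(inputs, targets):
    """Run the RNN over the input characters.

    Returns the per-step next-character probabilities and the mean
    cross-entropy loss of the target (next) characters.
    """
    h = [0.0] * HIDDEN
    probs, loss = [], 0.0
    for x_ch, y_ch in zip(inputs, targets):
        # h_t = tanh(W_xh x_t + W_hh h_{t-1}); the same weights are reused at every step
        a = [u + v for u, v in zip(matvec(W_xh, one_hot(x_ch)), matvec(W_hh, h))]
        h = [math.tanh(v) for v in a]
        # Output scores ("confidences") for the next character, as probabilities
        p = softmax(matvec(W_hy, h))
        probs.append(p)
        loss -= math.log(p[IDX[y_ch]])
    return probs, loss / len(inputs)


probs, loss = forward("hell", "ello")
for ch, p in zip("hell", probs):
    print(ch, "->", VOCAB[p.index(max(p))], [round(x, 3) for x in p])
print("mean cross-entropy:", round(loss, 3))
```

With untrained weights the predictions are essentially arbitrary, and the loss stays near ln 4 (about 1.386). Training lowers it by making the target characters ("e", "l", "l", "o") the most probable outputs.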

- QUOTE: