Software 2.0 Development Model

A Software 2.0 Development Model is a software development model that leverages ML methods (to achieve software system behavior).

- Context:

- It can (typically) use Data-Driven Development, prioritizing training over traditional coding.

- It can (typically) be applied to AI-Automated Tasks, such as computer vision and natural language processing.

- It can (typically) involve continuous data collection and model retraining to improve performance over time.

- It can (typically) involve creating and using Learning Datasets to define the software's desired behaviors and outcomes.

- It can (often) reduce human involvement in Software Coding, Software Performance Optimization, and Software Adaptation to new situations or data.

- It can (often) shift roles: Data Labelers focus on curating datasets, while Software Engineers maintain infrastructure and training code.

- It can range from being a Supervised Software 2.0 Development Approach to being an Unsupervised Software 2.0 Development Approach.

- It can produce Black-Box Software Systems, which are often less interpretable than traditional software.

- It can require Neural Network Architecture Design to process datasets and learn desired behaviors.

- ...
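The data-driven loop described above can be sketched in a few lines. Here the dataset, not the code, defines behavior, and retraining on newly collected data changes the output. This is a minimal sketch under stated assumptions. To keep it short, it uses a nearest-centroid classifier as a swapped-in stand-in for a neural network. The `train` function, the datasets, and the labels are all hypothetical.

```python
from statistics import mean


def train(dataset):
    """Return a predictor whose behaviour is determined entirely by the dataset.

    Hypothetical stand-in learner: a nearest-centroid classifier, not a neural network.
    """
    centroids = {}
    for label in {lbl for _, lbl in dataset}:
        centroids[label] = mean(x for x, lbl in dataset if lbl == label)

    def predict(x):
        # Pick the label whose class centroid is closest to x.
        return min(centroids, key=lambda label: abs(x - centroids[label]))

    return predict


# Hypothetical labelled dataset that specifies the desired behaviour.
dataset_v1 = [(1, "low"), (2, "low"), (3, "low"), (7, "high"), (8, "high"), (9, "high")]
model_v1 = train(dataset_v1)

# New labelled data arrives; the training code is unchanged, only the data grows.
dataset_v2 = dataset_v1 + [(5, "low"), (6, "low")]
model_v2 = train(dataset_v2)

print(model_v1(5.5), model_v2(5.5))  # high low
```

The prediction for 5.5 changes from `high` to `low` after retraining, even though `train` itself was never edited.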

- Example(s):

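- a Software 2.0 development model as proposed in (Karpathy, 2017), in which datasets and neural network architectures replace hand-written code.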

- ...

- Counter-Example(s):

- a Software 1.0 Approach, with manually coded software development of explicit software requirements.

- a Software 3.0 Approach, with ...

- See: Assistant-based Software Development, Software System Testing.

References

2023

- chat

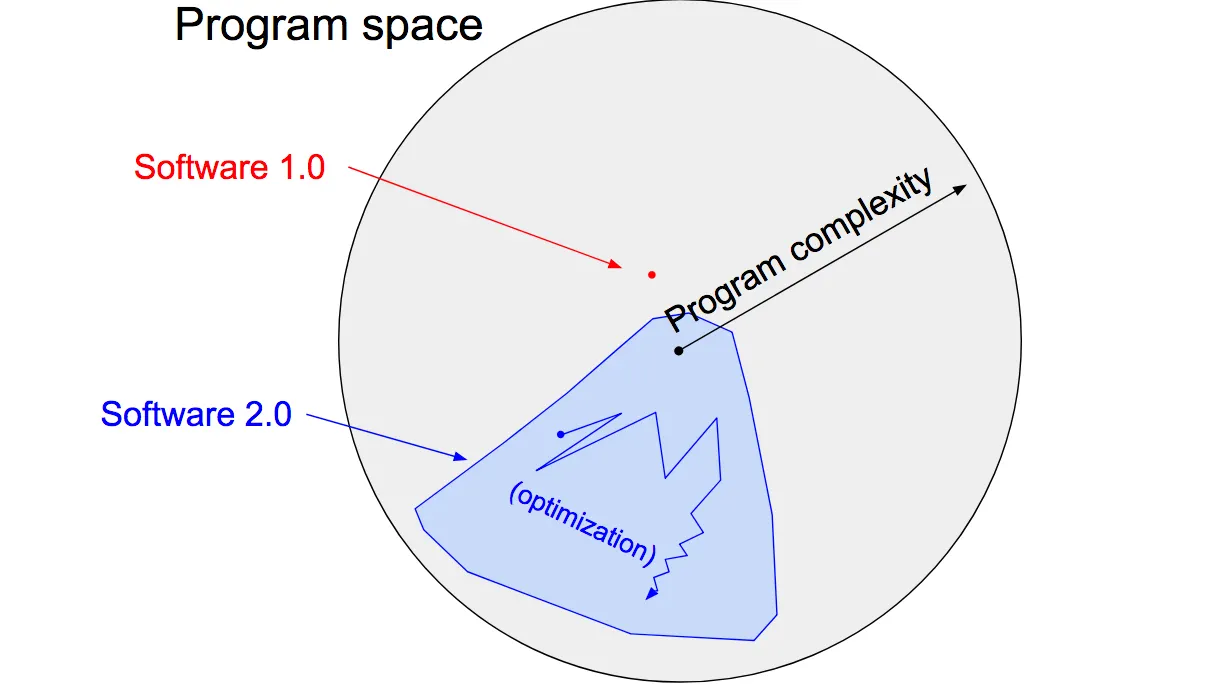

- Software 2.0 is a term coined by Andrej Karpathy, then Director of AI at Tesla, to describe the transition from traditional hand-coded software (Software 1.0) to machine learning-based software (Software 2.0). In this context, Software 1.0 refers to the conventional programming approach, where developers write explicit instructions and rules to execute specific tasks. This process can be time-consuming and error-prone, especially for complex problems.

In contrast, Software 2.0 uses machine learning algorithms and neural networks to "learn" from data and automatically derive rules or patterns without explicit instructions from a programmer. These algorithms can tackle tasks that are difficult to solve with traditional programming techniques, such as image recognition, natural language processing, and autonomous driving.
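The contrast between the two approaches can be sketched with a toy classification task. In this hypothetical example, `rule_1_0` is written by hand. `rule_2_0` is instead obtained by training a perceptron on labelled examples. The perceptron is a single-neuron classifier, used here as a minimal stand-in for a neural network. The threshold, the examples, and all function names are illustrative assumptions.

```python
def rule_1_0(x):
    # Software 1.0: a programmer writes the rule explicitly.
    return x > 0.5


def train_perceptron(examples, lr=1.0, max_epochs=1000):
    """Learn (w, b) so that w * x + b > 0 reproduces the labels (perceptron rule)."""
    w, b = 0.0, 0.0
    for _ in range(max_epochs):
        mistakes = 0
        for x, label in examples:
            predicted = w * x + b > 0
            if predicted != label:
                # Shift the decision boundary toward the misclassified example.
                step = lr if label else -lr
                w += step * x
                b += step
                mistakes += 1
        if mistakes == 0:  # every example is classified correctly
            break
    return w, b


# Software 2.0: the rule is specified only by (hypothetical) labelled examples.
examples = [(0.1, False), (0.2, False), (0.3, False), (0.4, False),
            (0.6, True), (0.7, True), (0.8, True), (0.9, True)]
w, b = train_perceptron(examples)


def rule_2_0(x):
    # The learned rule: its behaviour lives in the weights, not in hand-written logic.
    return w * x + b > 0


for x in (0.05, 0.3, 0.7, 0.95):
    print(x, rule_1_0(x), rule_2_0(x))  # the two rules agree on these inputs
```

Because the training data is linearly separable, the perceptron stops once every example is classified correctly. The learned threshold `-b / w` then falls somewhere between 0.4 and 0.6. It is not necessarily exactly 0.5, which illustrates that the learned program only matches the hand-coded one as far as the data constrains it.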

The main idea behind Software 2.0 is to leverage the power of artificial intelligence to reduce human involvement in coding, optimize performance, and adapt more easily to new situations or data. This shift in the software development paradigm is expected to revolutionize various industries and create more intelligent and adaptable systems.


2017

- (Karpathy, 2017) ⇒ Andrej Karpathy. (2017). "Software 2.0." In: Medium, [1].

- NOTE: It highlights the distinction between traditional programming (Software 1.0) and neural network-based programming (Software 2.0).

- NOTE: It describes a Software 2.0 development process, where datasets and neural network architectures replace traditional code.

- NOTE: It predicts the future dominance of Software 2.0 in fields where traditional algorithms are challenging to design.

- NOTE: It explains the new roles and team structures emerging from the adoption of Software 2.0.

- NOTE: It suggests new tools and infrastructure to support Software 2.0 development.

- QUOTE: In contrast, Software 2.0 is written in much more abstract, human unfriendly language, such as the weights of a neural network. No human is involved in writing this code because there are a lot of weights (typical networks might have millions), and coding directly in weights is kind of hard (I tried).

Instead, our approach is to specify some goal on the behavior of a desirable program (e.g., “satisfy a dataset of input output pairs of examples”, or “win a game of Go”), write a rough skeleton of the code (i.e. a neural net architecture) that identifies a subset of program space to search, and use the computational resources at our disposal to search this space for a program that works. In the case of neural networks, we restrict the search to a continuous subset of the program space where the search process can be made (somewhat surprisingly) efficient with backpropagation and stochastic gradient descent.
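The search described in the quote can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Karpathy's code. The "skeleton" is a single linear neuron, the smallest possible architecture. The goal is a hypothetical dataset of input-output pairs generated by y = 2x + 1, and the search is plain stochastic gradient descent on squared error. Each weight pair (w, b) is one candidate program, and training moves through that continuous space toward a program that satisfies the dataset.

```python
import random


def program_skeleton(weights, x):
    # The "rough skeleton": a single linear neuron. Each choice of
    # weights (w, b) is one point in the program space being searched.
    w, b = weights
    return w * x + b


def search_program(dataset, lr=0.1, epochs=200, seed=0):
    """Search the weight space with stochastic gradient descent on squared error."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    examples = list(dataset)
    for _ in range(epochs):
        rng.shuffle(examples)  # visit examples in a random order each epoch
        for x, y in examples:
            error = program_skeleton((w, b), x) - y
            # Gradients of 0.5 * error**2 with respect to w and b; for a single
            # linear unit, backpropagation reduces to these two terms.
            w -= lr * error * x
            b -= lr * error
    return w, b


# Goal: "satisfy a dataset of input output pairs" (hypothetical data from y = 2x + 1).
dataset = [(i / 10, 2 * (i / 10) + 1) for i in range(-10, 11)]
weights = search_program(dataset)
print(tuple(round(v, 3) for v in weights))  # (2.0, 1.0)
```

In a real neural network the skeleton has many layers and millions of weights, and backpropagation computes the gradients automatically. The outer loop keeps the same shape: specify the goal as data, fix the architecture, and let the optimizer search the weights.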